
Now that PostgreSQL 17 RC1 is out, we are on the home stretch towards the official PostgreSQL 17 release, scheduled for September 26, 2024.

Letʼs take a look at the patches that came in during the March CommitFest. Previous articles about PostgreSQL 17 CommitFests: 2023-07, 2023-09, 2023-11, 2024-01.

Together, these give an idea of what the new PostgreSQL will look like.

Reverts after code freeze

Unfortunately, some previously accepted patches didn't make it in after all. Some of the notable ones:

- ALTER TABLE... MERGE/SPLIT PARTITION (revert: 3890d90c)

- Temporal primary, unique and foreign keys (revert: 8aee330a)

- Planner: remove redundant self-joins (revert: d1d286d8)

- pg_constraint: NOT NULL constraints (revert: 16f8bb7c1)

Now, letʼs get to the new stuff.

SQL commands

- New features of the MERGE command

- COPY ... FROM: messages about discarded rows

- The SQL/JSON standard support

Performance

- SLRU cache configuration

- Planner: Merge Append for the UNION implementation

- Planner: materialized CTE statistics (continued)

- Optimization of parallel plans with DISTINCT

- Optimizing B-tree scans for sets of values

- VACUUM: new dead tuples storage

- VACUUM: combine WAL records for vacuuming and freezing

- Functions with subtransactions in parallel processes

Monitoring and management

- EXPLAIN (analyze, serialize): data conversion costs

- EXPLAIN: improved display of SubPlan and InitPlan nodes

- pg_buffercache: eviction from cache

Server

- random: a random number in the specified range

- transaction_timeout: session termination when the transaction timeout is reached

- Prohibit the use of ALTER SYSTEM

- The MAINTAIN privilege and the pg_maintain predefined role

- Built-in locale provider for C.UTF8

- pg_column_toast_chunk_id: ID of the TOAST value

- pg_basetype function: basic domain type

- pg_constraint: NOT NULL restrictions for domains

- New function to_regtypemod

- Hash indexes for ltree

Replication

- pg_createsubscriber: quickly create a logical replica from a physical one

- Logical slots: tracking the causes of replication conflicts

- pg_basebackup -R: dbname in primary_conninfo

- Synchronization of logical replication slots between the primary server and replicas

- Logical decoding optimization for subtransactions

Client applications

- libpq: non-locking query cancellation

- libpq: direct connection via TLS

- vacuumdb, clusterdb, reindexdb: processing individual objects in multiple databases

- reindexdb: --jobs and --index at the same time

- psql: new implementation of FETCH_COUNT

- pg_dump --exclude-extension

- Backup and restore large objects

Spring is in full swing as we bring you the hottest winter news of the January Commitfest. Let’s get to the good stuff right away!

Previous articles about PostgreSQL 17: 2023-07, 2023-09, 2023-11.

- Incremental backup

- Logical replication: maintaining the subscription status when upgrading the subscriber server

- Dynamic shared memory registry

- EXPLAIN (memory): report memory usage for planning

- pg_stat_checkpointer: restartpoint monitoring on replicas

- Building BRIN indexes in parallel mode

- Queries with the IS [NOT] NULL condition for NOT NULL columns

- Optimization of SET search_path

- GROUP BY optimization

- Support planner functions for range types

- PL/pgSQL: %TYPE and %ROWTYPE arrays

- Jsonpath: new data conversion methods

- COPY ... FROM: ignoring format conversion errors

- to_timestamp: format codes TZ and OF

- GENERATED AS IDENTITY in partitioned tables

- ALTER COLUMN ... SET EXPRESSION

The November commitfest is rich with interesting new features! Without further ado, let’s proceed with the review.

If you missed our July and September commitfest reviews, you can check them out here: 2023-07, 2023-09.

- ON LOGIN trigger

- Event triggers for REINDEX

- ALTER OPERATOR: commutator, negator, hashes, merges

- pg_dump --filter=dump.txt

- psql: displaying default privileges

- pg_stat_statements: track statement entry timestamps and reset min/max statistics

- pg_stat_checkpointer: checkpointer process statistics

- pg_stats: statistics for range type columns

- Planner: exclusion of unnecessary table self-joins

- Planner: materialized CTE statistics

- Planner: accessing a table with multiple clauses

- Index range scan optimization

- dblink, postgres_fdw: detailed wait events

- Logical replication: migration of replication slots during publisher upgrade

- Replication slot use log

- Unicode: new information functions

- New function: xmltext

- AT LOCAL support

- Infinite intervals

- ALTER SYSTEM with unrecognized custom parameters

- Building the server from source

We continue to follow the news of the PostgreSQL 17 development. Let’s find out what the September commitfest brings to the table.

If you missed our July commitfest review, you can check it out here: 2023-07.

- Removed the parameter old_snapshot_threshold

- New parameter event_triggers

- New functions to_bin and to_oct

- New system view pg_wait_events

- EXPLAIN: a JIT compilation time counter for tuple deforming

- Planner: better estimate of the initial cost of the WindowAgg node

- pg_constraint: NOT NULL constraints

- Normalization of CALL, DEALLOCATE and two-phase commit control commands

- unaccent: the target rule expressions now support values in quotation marks

- COPY FROM: FORCE_NOT_NULL * and FORCE_NULL *

- Audit of connections without authentication

- pg_stat_subscription: new column worker_type

- The behaviour of pg_promote in case of unsuccessful switchover to a replica

- Choosing the disk synchronization method in server utilities

- pg_restore: optimization of parallel recovery of a large number of tables

- pg_basebackup and pg_receivewal with the parameter dbname

- Parameter names for a number of built-in functions

- psql: \watch min_rows

We continue to follow the news in the world of PostgreSQL. The PostgreSQL 16 Release Candidate 1 was rolled out on August 31. If all is well, PostgreSQL 16 will be officially released on September 14.

What has changed in the upcoming release after the April code freeze? What’s getting into PostgreSQL 17 after the first commitfest? Read our latest review to find out!

PostgreSQL 16

For reference, here are our previous reviews of PostgreSQL 16 commitfests: 2022-07, 2022-09, 2022-11, 2023-01, 2023-03.

Since April, there have been some notable changes.

Let’s start with the losses. The following updates have not made it into the release:

- MAINTAIN — a new privilege for table maintenance (commit: 151c22de)

- Setting parameter values at the database and user level (commit: b9a7a822)

Some patches have been updated:

...

PostgreSQL 14 Internals for print on demand

A quick note to let you know that the PostgreSQL 14 Internals book is now available to order through a worldwide print-on-demand service. You can buy a hardcover or paperback version. The PDF is still freely available on our website.

The end of the March Commitfest concludes the acceptance of patches for PostgreSQL 16. Let’s take a look at some exciting new updates it introduced.

I hope that this review together with the previous articles in the series (2022-07, 2022-09, 2022-11, 2023-01) will give you a coherent idea of the new features of PostgreSQL 16.

As usual, the March Commitfest introduces a ton of new changes. I’ve split them into several sections for convenience.

Monitoring

- pg_stat_io: input/output statistics

- Counter for new row versions moved to another page when performing an UPDATE

- pg_buffercache: new pg_buffercache_usage_counts function

- Normalization of DDL and service commands, continued

- EXPLAIN (generic_plan): generic plan of a parameterized query

- auto_explain: logging the query ID

- PL/pgSQL: GET DIAGNOSTICS .. PG_ROUTINE_OID

Client applications

- psql: variables SHELL_ERROR and SHELL_EXIT_CODE

- psql: \watch and the number of repetitions

- psql: \df+ does not show the source code of functions

- pg_dump: support for LZ4 and zstd compression methods

- pg_dump and partitioned tables

- pg_verifybackup --progress

- libpq: balancing connections

Server administration and maintenance

- initdb: setting configuration parameters during cluster initialization

- Autovacuum: balancing I/O impact on the fly

- Managing the size of shared memory for vacuum and analyze

- VACUUM for TOAST tables only

- The vacuum_defer_cleanup_age parameter has been removed

- pg_walinspect: interpretation of the end_lsn parameter

- pg_walinspect: pg_get_wal_fpi_info → pg_get_wal_block_info

Localization

- ICU: UNICODE collation

- ICU: Canonization of locales

- ICU: custom rules for customizing the sorting algorithm

Security

- libpq: new parameter require_auth

- scram_iterations: iteration counter for password encryption using SCRAM-SHA-256

SQL functions and commands

- SQL/JSON standard support

- New functions pg_input_error_info and pg_input_is_valid

- The Daitch-Mokotoff Soundex

- New functions array_shuffle and array_sample

- New aggregate function any_value

- COPY: inserting default values

- timestamptz: adding and subtracting time intervals

- XML: formatting values

- pg_size_bytes: support for "B"

- New functions: erf, erfc

Performance

- Parallel execution of full and right hash joins

- Options for the right antijoin

- Relation extension mechanism rework

- Don’t block HOT update by BRIN index

- postgres_fdw: aborting transactions on remote servers in parallel mode

- force_parallel_mode → debug_parallel_query

- Direct I/O (for developers only)

Logical replication

- Logical replication from a physical replica

- Using non-unique indexes with REPLICA IDENTITY FULL

- Initial synchronization in binary format

- Privileges for creating subscriptions and applying changes

- Committing changes in parallel mode (for developers only)

...

PostgreSQL 14 Internals

I’m excited to announce that the translation of the “PostgreSQL 14 Internals” book is finally complete thanks to the amazing work of Liudmila Mantrova.

The final part of the book considers each of the index types in great detail. It explains and demonstrates how access methods, operator classes, and data types work together to serve a variety of distinct needs.

You can download a PDF version of this book for free. We are also working on making it available on a print-on-demand service.

Your comments are very welcome. Contact us at edu@postgrespro.ru.

We continue to follow the news of the PostgreSQL 16 release, and today, the results of the fourth CommitFest are on the table. Let’s have a look.

If you missed the previous CommitFests, check out our reviews for 2022-07, 2022-09 and 2022-11.

Here are the patches I want to talk about this time:

- New function: random_normal

- Input formats for integer literals

- Goodbye, postmaster

- Parallel execution for string_agg and array_agg

- New parameter: enable_presorted_aggregate

- Planner support function for working with window functions

- Optimized grouping of repeating columns in GROUP BY and DISTINCT

- VACUUM parameters: SKIP_DATABASE_STATS and ONLY_DATABASE_STATS

- pg_dump: lock tables in batches

- PL/pgSQL: cursor variable initialization

- Roles with the CREATEROLE attribute

- Setting parameter values at the database and user level

- New parameter: reserved_connections

- postgres_fdw: analyzing foreign tables with TABLESAMPLE

- postgres_fdw: batch insert records during partition key updates

- pg_ident.conf: new ways to identify users in PostgreSQL

- Query jumbling for DDL and utility statements

- New function: bt_multi_page_stats

- New function: pg_split_walfile_name

- pg_walinspect, pg_waldump: collecting page images from WAL

We continue to follow the news of the upcoming PostgreSQL 16. The third CommitFest concluded in early December. Let's look at the results.

If you missed the previous CommitFests, check out our reviews: 2022-07, 2022-09.

Here are the patches I want to talk about:

- meson: a new source code build system

- Documentation: a new chapter on transaction processing

- psql: \d+ indicates foreign partitions in a partitioned table

- psql: extended query protocol support

- Predicate locks on materialized views

- Tracking last scan time of indexes and tables

- pg_buffercache: a new function pg_buffercache_summary

- walsender displays the database name in the process status

- Reducing the WAL overhead of freezing tuples

- Reduced power consumption when idle

- postgres_fdw: batch mode for COPY

- Modernizing the GUC infrastructure

- Hash index build optimization

- MAINTAIN ― a new privilege for table maintenance

- SET ROLE: better role change management

- Support for file inclusion directives in pg_hba.conf and pg_ident.conf

- Regular expressions support in pg_hba.conf

PostgreSQL 14 Internals, Part IV

I’m excited to announce that the translation of Part IV of the “PostgreSQL 14 Internals” book is published. This part delves into the inner workings of the planner and the executor, and it took me a couple of hundred pages to get through all the magic that covers this advanced technology.

You can download the book freely in PDF. The last part is yet to come, so stay tuned!

I’d like to thank Alejandro García Montoro, Goran Pulevic, and Japin Li for their feedback and suggestions. Your comments are much appreciated. Contact us at edu@postgrespro.ru.

It’s official! PostgreSQL 15 is out, and the community is abuzz discussing all the new features of the fresh release.

Meanwhile, the October CommitFest for PostgreSQL 16 has come and gone, with its own notable additions to the code.

If you missed the July CommitFest, our previous article will get you up to speed in no time.

Here are the patches I want to talk about:

- SYSTEM_USER function

- Frozen pages/tuples information in autovacuum's server log

- pg_stat_get_backend_idset returns the actual backend ID

- Improved performance of ORDER BY / DISTINCT aggregates

- Faster bulk-loading into partitioned tables

- Optimized lookups in snapshots

- Bidirectional logical replication

- pg_auth_members: role membership granting management

- pg_auth_members: role membership and privilege inheritance

- pg_receivewal and pg_recvlogical can now handle SIGTERM

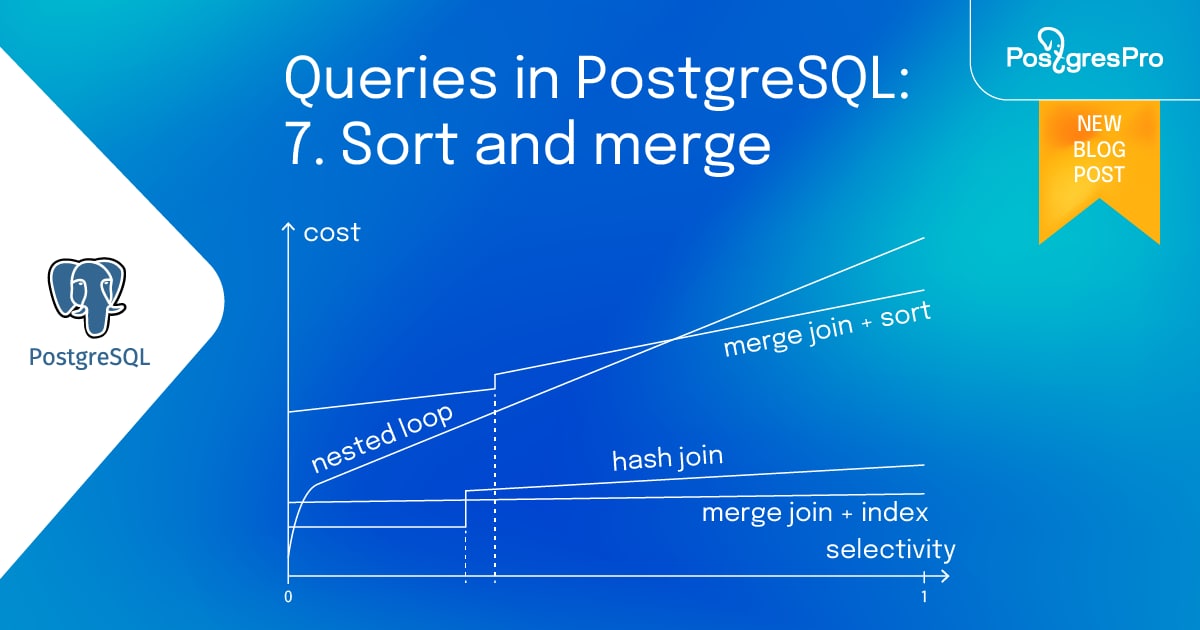

Queries in PostgreSQL: 7. Sort and merge

In the previous articles, I covered query execution stages, statistics, sequential and index scans, and two of the three join methods: nested loop and hash join. This last article of the series will cover the merge algorithm and sorting. I will also demonstrate how the three join methods compare against each other.

PostgreSQL 14 Internals, Part III

I’m excited to announce that the translation of Part III of the “PostgreSQL 14 Internals” book is finished. This part is about a diverse world of locks, which includes a variety of heavyweight locks used for all kinds of purposes, several types of locks on memory structures, row locks which are not exactly locks, and even predicate locks which are not locks at all.

Please download the book freely in PDF. We have two more parts to come, so stay tuned!

Your comments are much appreciated. Contact us at edu@postgrespro.ru.